Practical State Machines in NetKernel

Following up on my previous post, ROC Hockey, where I introduced our new implementation of Hierarchical State Machines on NetKernel, I’ve decided to cover how they are actually used in practice in an ROC system.

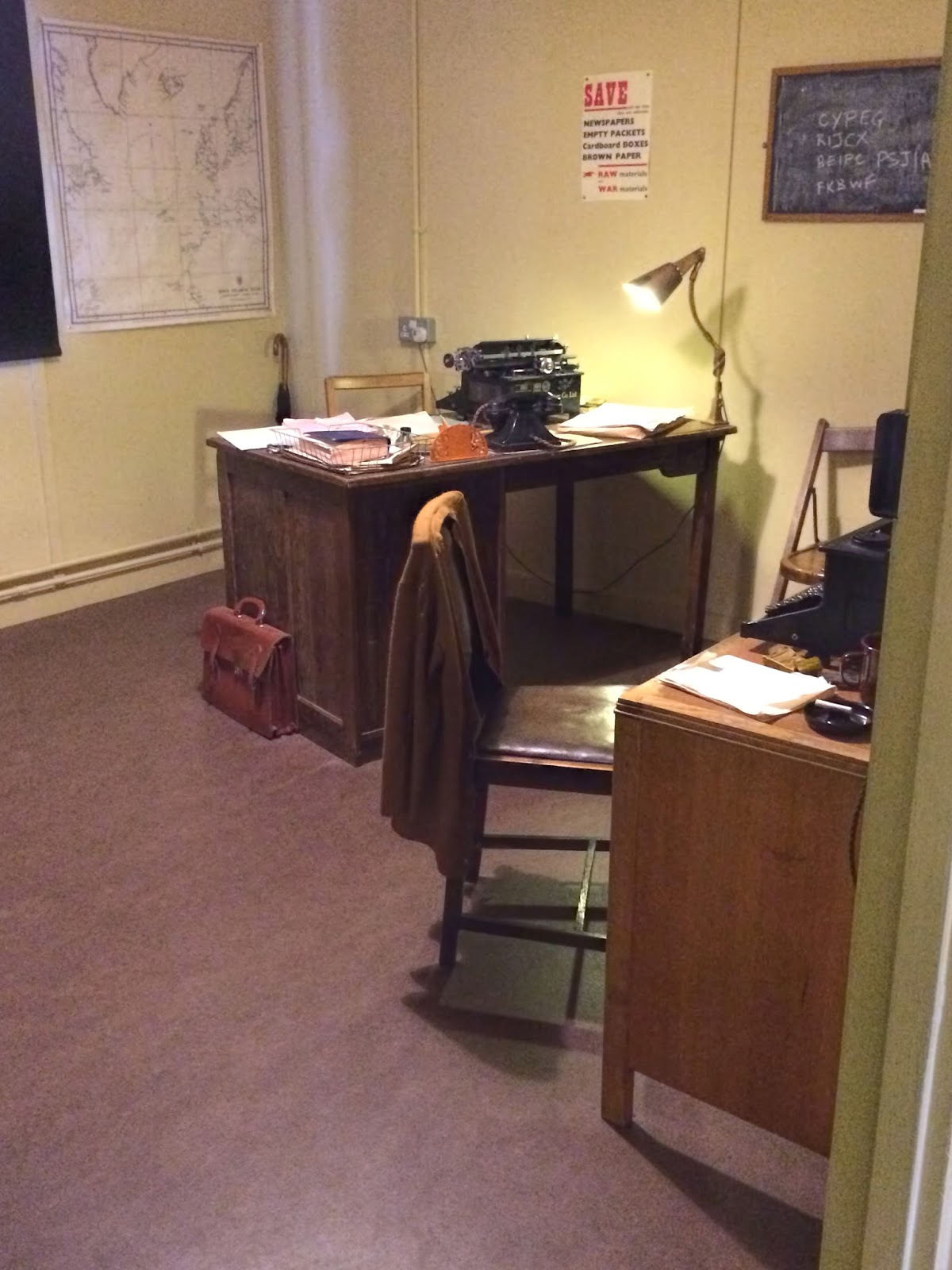

Turing’s Desk in Hut 8, Bletchley Park
